TechGen Gadget Store (Example Website Template)

Mauris blandit aliquet elit, eget tincidunt nibh pulvinar

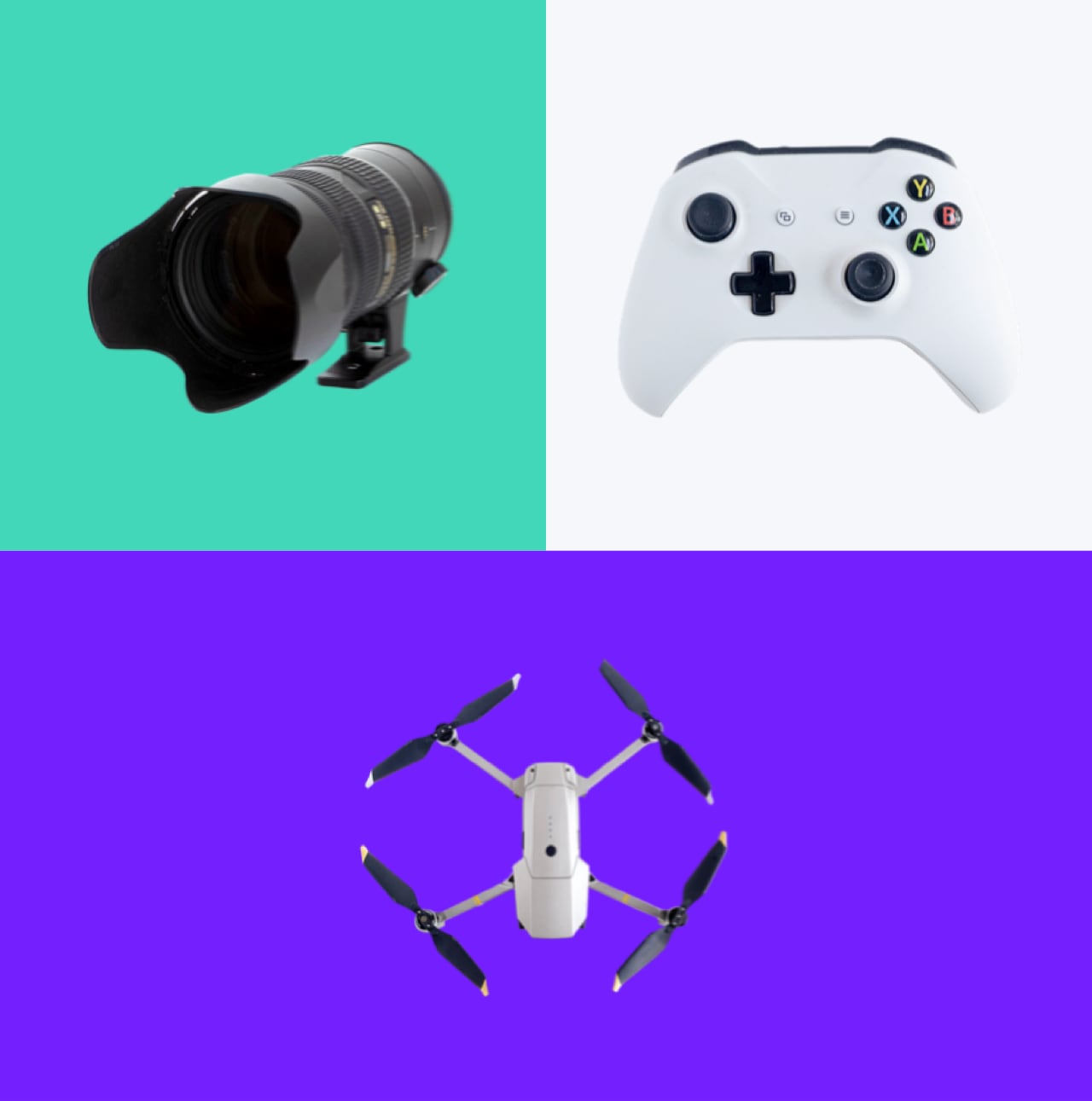

New Gaming Accessory Arrivals

10% Off All Photo Accessories

Camera Drones

Camera Accessories

Portable Audio

Something For Everyone

Mauris blandit aliquet elit, eget tincidunt nibh pulvinar a. Vestibulum ante ipsum

Shop By Brand

Company

1235 Divi St. San Francisco, CA 92351

Copyright © 2024 Company